I still remember the first time I encountered explainable AI in clinical research. It was a breath of fresh air in a field often plagued by obscure jargon and overcomplicated solutions. What really got my attention was how it showed us why a model reached a given decision, which is crucial for building trust in the medical community. As someone who’s worked in the trenches, I’ve seen firsthand how a lack of transparency can hinder even the most promising projects. That’s why I’m excited to share my thoughts on how explainable AI is changing the game.

In this article, I promise to cut through the hype and provide you with no-nonsense advice on how to leverage explainable AI in clinical research to achieve real results. I’ll draw from my own experiences, sharing practical insights and lessons learned along the way. My goal is to empower you with the knowledge and confidence to make informed decisions about using explainable AI in your own research, without getting bogged down in unnecessary complexity. By the end of this journey, you’ll have a clear understanding of how to harness the power of explainable AI to drive meaningful progress in clinical research.


Revolutionizing Clinical Trials

Interpretable machine learning models for medical diagnosis have been a significant development for clinical trials. Because these models show how they arrive at their conclusions, researchers can spot potential errors and improve the accuracy of their findings. Clinicians, in turn, can make more informed decisions, which can lead to better patient outcomes.

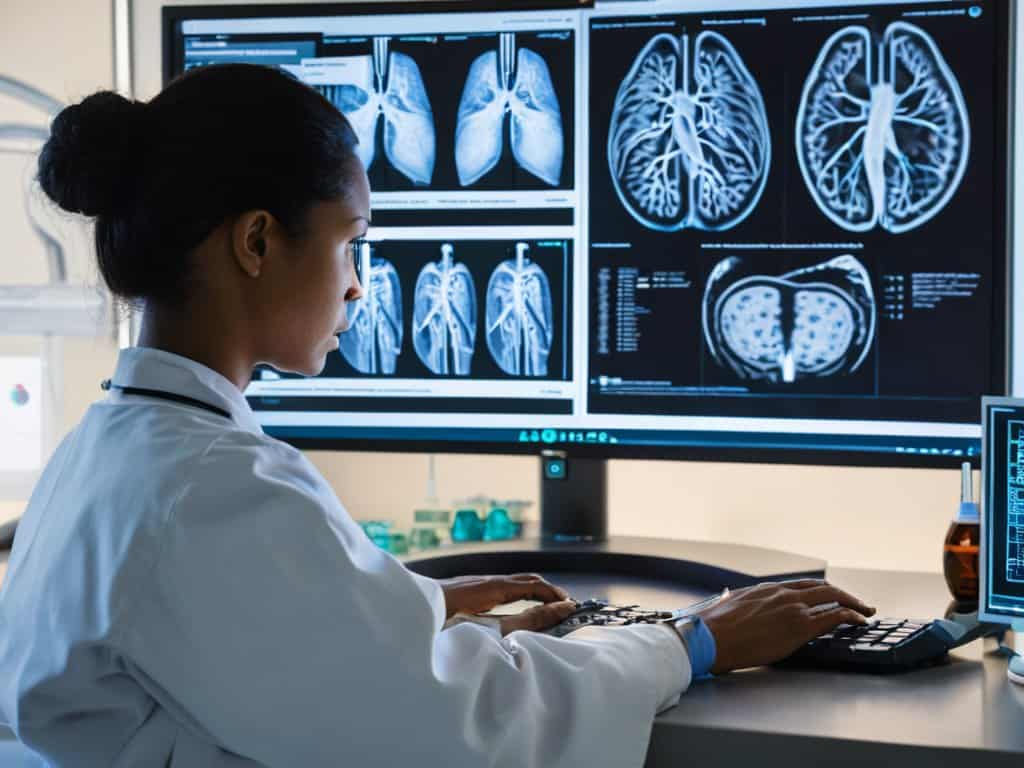

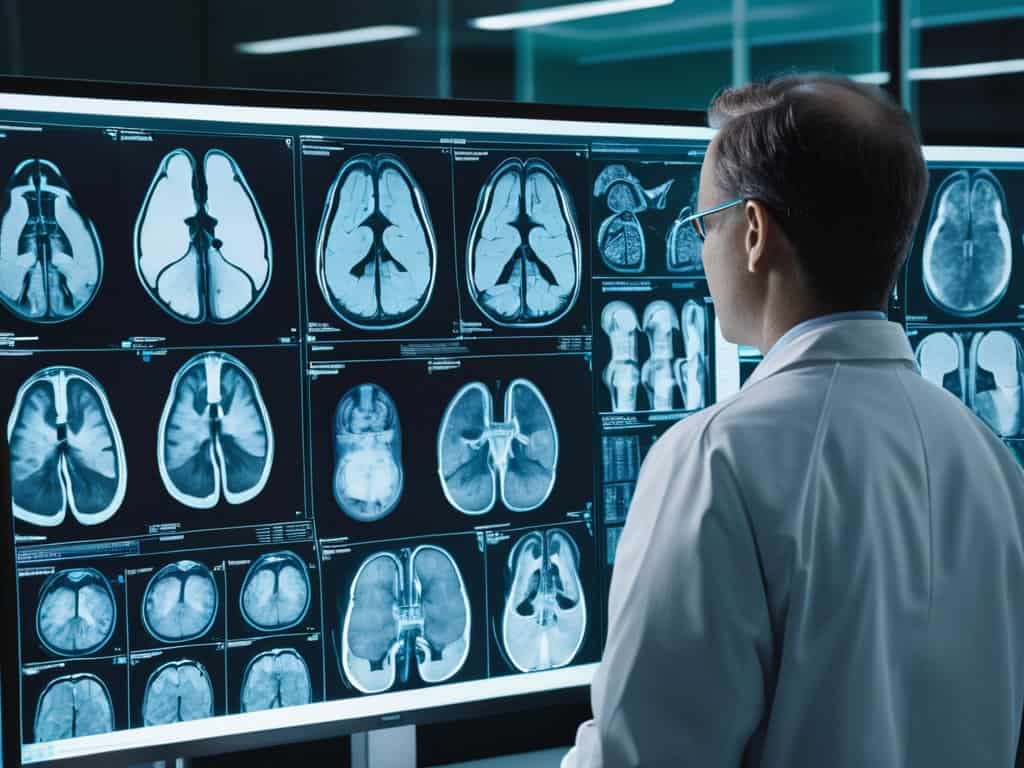

In the context of medical imaging analysis, explainable AI can help uncover subtle patterns that may not be immediately apparent to human researchers. By leveraging transparent AI decision making in healthcare, clinicians can gain a deeper understanding of the underlying factors that contribute to disease progression. This knowledge can then be used to develop more effective treatment strategies, tailored to the specific needs of individual patients.

The use of explainable deep learning for disease prediction is another area where clinical trials are being revolutionized. By analyzing large datasets and identifying complex relationships between variables, researchers can develop more accurate predictive models. This enables clinicians to identify high-risk patients and intervene early, potentially preventing the onset of disease or mitigating its severity.

Reducing Bias in Clinical Data Analysis

To ensure the integrity of clinical research, it’s crucial to address bias in data analysis, because biased data produces inaccurate and unreliable results. Explainable AI helps researchers identify and mitigate potential biases in the data, leading to more trustworthy conclusions.

The use of transparent algorithms in data analysis enables researchers to understand how the AI system arrives at its conclusions, allowing them to detect and correct any biases that may be present. This increased transparency helps to build confidence in the research findings and ensures that the results are not skewed by underlying biases.
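One simple way to start looking for the kind of bias described above is to compare a model's sensitivity (true positive rate) across subgroups. The snippet below is a minimal sketch of that audit, not a full explainability method. The subgroup names, labels, and predictions are made-up toy data, and the helper names are my own.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return the true positive rate (sensitivity) for each subgroup.

    Each record is a (group, true_label, predicted_label) tuple with 0/1 labels.
    Groups without any positive cases are left out of the result.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {group: true_pos[group] / positives[group] for group in positives}


def sensitivity_gap(records):
    """Difference between the best and worst subgroup sensitivity."""
    rates = sensitivity_by_group(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical toy data: (subgroup, true label, model prediction).
records = [
    ("site_A", 1, 1), ("site_A", 1, 1), ("site_A", 1, 0), ("site_A", 1, 1), ("site_A", 0, 0),
    ("site_B", 1, 1), ("site_B", 1, 0), ("site_B", 1, 0), ("site_B", 1, 0), ("site_B", 0, 1),
]

rates = sensitivity_by_group(records)
gap = sensitivity_gap(records)
print(rates, gap)
```

A large gap does not prove the model is unfair, but it tells you where to look more closely before trusting the results.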

Transparent AI for Medical Diagnosis

When it comes to medical diagnosis, transparent decision-making is crucial for building trust between doctors and patients. Explainable AI can provide insights into how AI systems arrive at their conclusions, enabling medical professionals to make more informed decisions. This, in turn, can lead to more accurate diagnoses and effective treatments.

By using explainable AI, medical professionals can identify potential biases in AI-driven diagnoses, which is essential for ensuring fair and equitable treatment for all patients.

Explainable AI in Clinical Research

Transparent AI decision-making has been a significant development in clinical research. With explainable AI, researchers can see how algorithms arrive at their conclusions, which fosters trust in the medical community. This shift towards transparency is particularly crucial in medical diagnosis, where the stakes are high and accuracy is paramount.

In the context of clinical trial data analysis, explainable AI has proven to be a valuable tool. By using interpretable machine learning models, researchers can identify potential biases and errors, ensuring that their findings are both accurate and reliable. This, in turn, can lead to more effective treatments and better patient outcomes. Furthermore, explainable AI can help reduce algorithmic bias in medical research, a persistent issue that has plagued the field for years.

The potential of explainable AI in clinical research extends to medical imaging analysis, where it can be used to improve disease prediction and diagnosis. By providing a clearer understanding of how AI algorithms analyze medical images, explainable AI can help doctors make more informed decisions, ultimately leading to better patient care. As the field continues to evolve, it is likely that explainable deep learning will play an increasingly important role in shaping the future of clinical research.

Deep Learning for Medical Imaging Insights

Deep learning is being leveraged to uncover hidden patterns in medical imaging, leading to more accurate diagnoses. By utilizing convolutional neural networks, researchers can analyze large amounts of data from images such as X-rays and MRIs. This approach has shown great promise in detecting diseases like cancer at an early stage.

The use of deep learning algorithms in medical imaging can also help reduce the workload of radiologists, allowing them to focus on more complex cases. Additionally, these algorithms can assist in identifying potential health risks, enabling doctors to take preventative measures and improve patient outcomes.
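To make imaging models easier to inspect, one common technique is occlusion sensitivity: mask one region of the image at a time and record how much the model's score drops. Here is a minimal sketch of the idea in plain Python. The `toy_score` function is a hypothetical stand-in for a trained classifier, and the 4x4 "image" is invented for illustration.

```python
def occlusion_map(image, score_fn, patch=2, baseline=0.0):
    """Return a heat map of score drops.

    Each patch x patch block is replaced by `baseline` in turn, and every
    pixel in that block is assigned the resulting drop in the model score.
    """
    height, width = len(image), len(image[0])
    base_score = score_fn(image)
    heat = [[0.0] * width for _ in range(height)]
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            occluded = [row[:] for row in image]  # copy so the input stays intact
            rows = range(top, min(top + patch, height))
            cols = range(left, min(left + patch, width))
            for r in rows:
                for c in cols:
                    occluded[r][c] = baseline
            drop = base_score - score_fn(occluded)
            for r in rows:
                for c in cols:
                    heat[r][c] = drop
    return heat


def toy_score(image):
    """Hypothetical 'model': responds only to the lower-right 2x2 block."""
    return sum(image[r][c] for r in (2, 3) for c in (2, 3))


# Invented 4x4 image with a bright region in the lower-right corner.
image = [
    [0.1, 0.1, 0.1, 0.1],
    [0.1, 0.1, 0.1, 0.1],
    [0.1, 0.1, 0.9, 0.9],
    [0.1, 0.1, 0.9, 0.9],
]

heat = occlusion_map(image, toy_score)
for row in heat:
    print(row)
```

Regions with a large drop are the ones the model relies on. With a real network you would swap `toy_score` for the model's predicted probability, and a radiologist could then check whether the highlighted regions make clinical sense.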

Interpretable Models for Disease Prediction

When it comes to disease prediction, accurate forecasting is crucial for effective treatment and patient care. By leveraging interpretable models, clinicians can gain a deeper understanding of the underlying factors that contribute to disease progression. This enables them to make more informed decisions and develop targeted therapies.

The use of machine learning algorithms in disease prediction has shown great promise, but it’s essential to ensure that these models are transparent and explainable. By doing so, researchers can identify potential biases and areas for improvement, ultimately leading to better patient outcomes.
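As a small illustration of what an interpretable model looks like in practice, consider logistic regression, where each feature's contribution to the prediction can be read off directly. The sketch below uses coefficients and feature names that I made up for the example. They are not fitted to any real data and carry no clinical meaning.

```python
import math

# Hypothetical coefficients, for illustration only; not fitted to real data.
INTERCEPT = -4.0
COEFFICIENTS = {
    "age_decades": 0.5,          # per 10 years of age
    "smoker": 1.0,               # 1 if current smoker, else 0
    "sbp_over_120_per_10": 0.25,  # per 10 mmHg of systolic pressure above 120
}


def explain_prediction(patient):
    """Return (predicted probability, per-feature contribution to the log-odds)."""
    contributions = {name: COEFFICIENTS[name] * patient[name] for name in COEFFICIENTS}
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions


def odds_ratios():
    """Odds ratio for a one-unit increase in each feature."""
    return {name: math.exp(beta) for name, beta in COEFFICIENTS.items()}


# Hypothetical patient: 60 years old, smoker, systolic pressure 160 mmHg.
patient = {"age_decades": 6, "smoker": 1, "sbp_over_120_per_10": 4}
probability, contributions = explain_prediction(patient)

print(f"predicted risk: {probability:.2f}")
for name, value in sorted(contributions.items(), key=lambda item: -item[1]):
    print(f"{name}: {value:+.2f} log-odds")
```

Because every contribution is additive on the log-odds scale, a clinician can see exactly which factors pushed a patient's predicted risk up, which is much harder to do with a black-box model.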

Unlocking the Power of Explainable AI: 5 Essential Tips for Clinical Research

- Embrace Transparency: Demand explainable AI models that provide clear insights into their decision-making processes, fostering trust and accountability in clinical trials

- Leverage Model Interpretability: Utilize techniques like feature importance and partial dependence plots to understand how AI models are making predictions, ensuring that results are reliable and generalizable

- Address Bias Proactively: Implement robust data validation and testing protocols to identify and mitigate biases in clinical data analysis, promoting fairness and equity in AI-driven medical research

- Integrate Human Expertise: Collaborate with clinicians and domain experts to provide context and validate AI-generated insights, ensuring that results are clinically relevant and actionable

- Monitor and Update: Continuously evaluate and refine explainable AI models as new data becomes available, adapting to evolving clinical needs and helping AI systems remain accurate and effective
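The interpretability tip above mentions feature importance and partial dependence, so here is a minimal sketch of both in plain Python. I use permutation importance as the feature-importance method, which is one choice among several. The model, data, and function names are invented for the example.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(rows, labels)) / len(rows)


def with_column(rows, feature, values):
    """Copy of `rows` with one feature column replaced by `values`."""
    return [row[:feature] + [value] + row[feature + 1:] for row, value in zip(rows, values)]


def permutation_importance(model, rows, labels, feature, repeats=20, seed=0):
    """Mean drop in accuracy after shuffling one feature column."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    drops = []
    for _ in range(repeats):
        column = [row[feature] for row in rows]
        rng.shuffle(column)
        drops.append(baseline - accuracy(model, with_column(rows, feature, column), labels))
    return sum(drops) / repeats


def partial_dependence(model, rows, feature, grid):
    """Average prediction when the feature is forced to each grid value."""
    return [
        sum(model(row) for row in with_column(rows, feature, [value] * len(rows))) / len(rows)
        for value in grid
    ]


def toy_model(row):
    """Hypothetical classifier that only looks at feature 0."""
    return 1 if row[0] >= 0.5 else 0


# Invented data: feature 0 drives the label, feature 1 is noise.
rows = [[0.1, 5], [0.3, 1], [0.7, 4], [0.9, 2], [0.6, 3], [0.2, 6]]
labels = [0, 0, 1, 1, 1, 0]

importance_signal = permutation_importance(toy_model, rows, labels, feature=0)
importance_noise = permutation_importance(toy_model, rows, labels, feature=1)
curve_signal = partial_dependence(toy_model, rows, feature=0, grid=[0.2, 0.8])
curve_noise = partial_dependence(toy_model, rows, feature=1, grid=[1, 6])
print(importance_signal, importance_noise, curve_signal, curve_noise)
```

In this toy setup, shuffling the noise feature never changes accuracy, and its partial dependence curve is flat, while the curve for feature 0 jumps at the model's threshold. For real projects, libraries such as scikit-learn offer tested versions of both techniques in the `sklearn.inspection` module, so you rarely need to hand-roll them.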

Key Takeaways from Explainable AI in Clinical Research

Explainable AI is transforming clinical trials by providing transparent and interpretable models that reduce bias and increase trust in medical diagnosis and treatment

Deep learning and machine learning algorithms can be applied to medical imaging to gain valuable insights and improve disease prediction, leading to more accurate and personalized patient care

By embracing explainable AI, clinical researchers can unlock new possibilities for collaboration, innovation, and discovery, ultimately leading to better health outcomes and improved patient experiences

Unlocking Trust in Medical AI

Explainable AI is the linchpin of modern clinical research, bridging the gap between human intuition and machine intelligence to create a safer, more transparent healthcare landscape.

Ethan Thompson

Conclusion

As we’ve explored the realm of explainable AI in clinical research, it’s clear that this technology has the potential to revolutionize the way we approach medical studies. From transparent AI for medical diagnosis to reducing bias in clinical data analysis, the benefits are numerous. By leveraging interpretable models for disease prediction and deep learning for medical imaging insights, researchers can gain a deeper understanding of complex medical issues, leading to more effective treatments and better patient outcomes.

As we move forward, it’s essential to remember that the true power of explainable AI lies in its ability to build trust between researchers, clinicians, and patients. By embracing this technology, we can unlock new possibilities for medical research and improve the lives of countless individuals. The future of clinical research is bright, and with explainable AI at the forefront, we can expect to see significant breakthroughs in the years to come.

Frequently Asked Questions

How can explainable AI ensure that medical professionals understand and trust the decisions made by AI systems in clinical research?

Explainable AI helps medical professionals trust AI decisions by showing clearly how those decisions are made. That transparency and accountability are crucial for building trust in the medical community.

What are the potential challenges and limitations of implementing explainable AI in clinical trials, and how can they be addressed?

Honestly, implementing explainable AI in clinical trials comes with its own set of hurdles, like data quality issues and model complexity. But by investing in robust data validation and developing more transparent models, we can work through these challenges and make explainable AI a cornerstone of clinical research.

Can explainable AI help reduce healthcare disparities by providing more transparent and unbiased medical diagnosis and treatment recommendations?

Explainable AI can be a powerful tool in reducing healthcare disparities. By making diagnosis and treatment recommendations more transparent, it makes biases easier to detect and correct, helping to build trust and support equitable care for all patients, regardless of their background or socioeconomic status.